Human neurons grown in a lab dish and wired into Cortical Labs’ CL1 platform have moved on from batting a Pong ball to steering a Doom marine, pairing living biological tissue with deliberate, score-chasing gameplay.

Watching the 1993 shooter driven by that network, you could forget you are looking at living cells. What look like twitchy reflexes are spikes of neural activity that software translates into movement. The result is a biological-computing demo: Doom running on unusual hardware, streamed through the experimental Cortical Cloud service, with its source code published on GitHub.

From donor cells to a chip-fed neural network built to stay alive for months

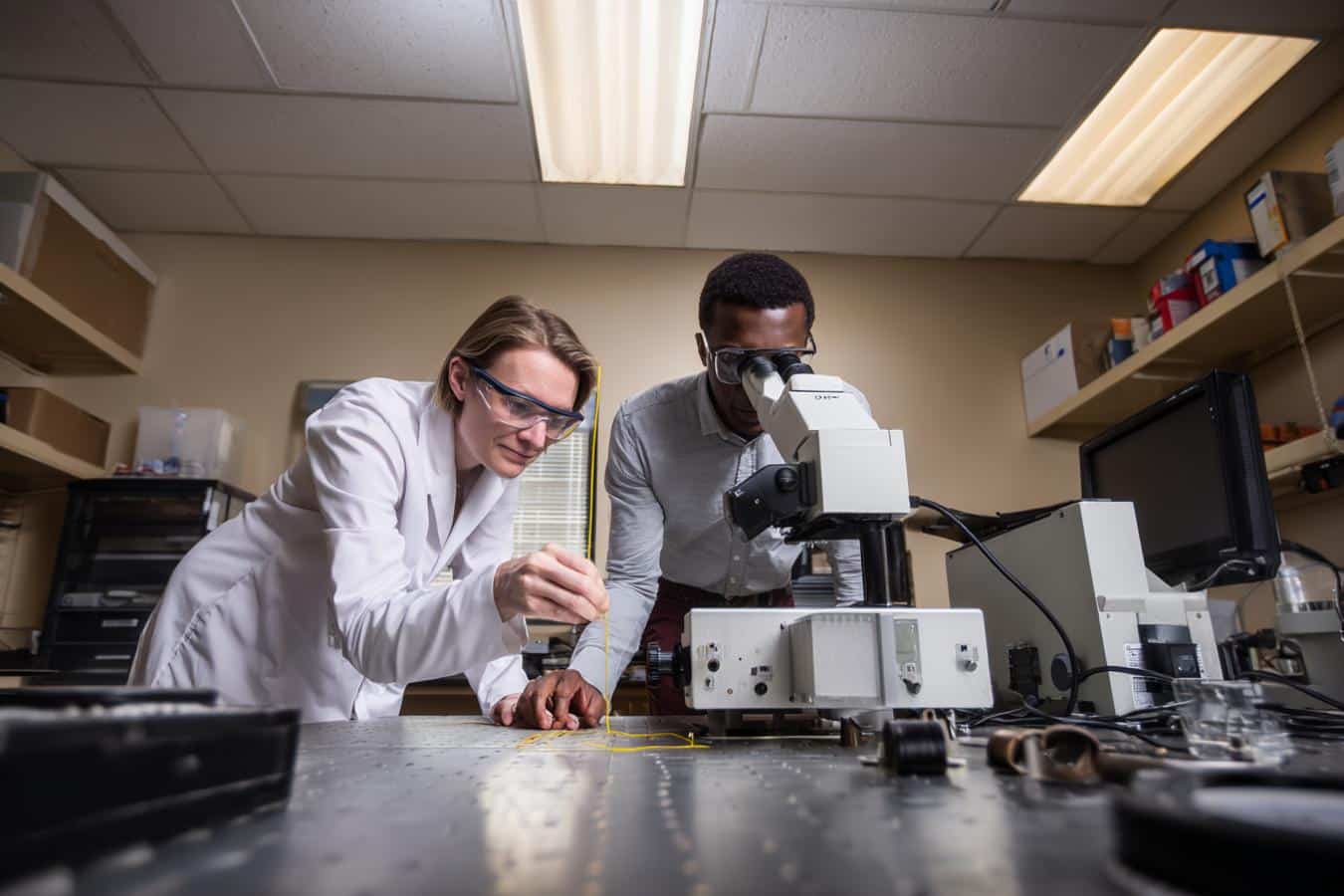

At its lab in Australia, Cortical Labs cultivates about 800,000 human neurons on each CL1 chip, but the process starts long before the cells reach the chip. Technicians reprogram cells taken from adult skin or blood donors into induced pluripotent stem cells, then differentiate them into cortical neurons. The resulting cultures carry the donors’ human genetics while the donors themselves remain anonymous, turning a medical sample into the raw material for a new kind of computer.

After maturation, these neurons are spread across a 59-channel planar electrode array built from metal and glass, allowing the CL1 to read and stimulate activity throughout the culture. Around this living layer sits an internal life-support system that regulates temperature, gas mix, circulation and waste filtration, keeping the neural network stable enough to operate continuously for up to six months on a single cartridge.
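The article does not say how the CL1 turns raw electrode voltages into the "spikes" that drive the game. A common approach in multi-electrode-array work is threshold crossing against a robust noise estimate, sketched below in Python under that assumption. The names `detect_spikes` and `spike_counts`, the 5× threshold and the 30-sample refractory window are illustrative choices, not Cortical Labs’ pipeline.

```python
import random
import statistics

N_CHANNELS = 59  # electrode count quoted in the article


def detect_spikes(trace, k=5.0, refractory=30):
    """Hypothetical spike detector: indices where the voltage dips below -k * sigma.

    sigma is estimated robustly as median(|x|) / 0.6745, so a few large spikes
    barely move the noise estimate. After a detection, the next `refractory`
    samples are skipped so one spike is not counted twice.
    """
    sigma = statistics.median(abs(v) for v in trace) / 0.6745
    threshold = -k * sigma
    spikes = []
    last = -refractory
    for i, v in enumerate(trace):
        if v < threshold and i - last >= refractory:
            spikes.append(i)
            last = i
    return spikes


def spike_counts(traces, k=5.0):
    """Spike count per channel for one recording window (one trace per electrode)."""
    return [len(detect_spikes(t, k)) for t in traces]


if __name__ == "__main__":
    rng = random.Random(0)
    traces = []
    for ch in range(N_CHANNELS):
        # Synthetic data: unit-variance Gaussian noise ...
        trace = [rng.gauss(0.0, 1.0) for _ in range(2_000)]
        # ... plus (ch % 4) large negative deflections standing in for spikes.
        for j in range(ch % 4):
            trace[200 + 400 * j] = -20.0
        traces.append(trace)
    print(spike_counts(traces)[:8])
```

The median-based noise estimate is the design choice that matters here: a plain standard deviation would be inflated by the very spikes being detected, raising the threshold on the busiest channels.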

Running Doom on a biological computer, and what that says about wetware performance

On the CL1, Doom’s 1993 demons reach the neurons through Cortical Labs’ Cortical Cloud platform, where code talks to the culture through the biOS deployment layer; the supporting tooling is mirrored on GitHub. Visual and game-state data are translated into electrical stimulation patterns across the 59 electrodes, and the spikes the culture returns steer the game in real time with sub-millisecond latency. Learning depends on carefully tuned reward-and-punishment feedback that sculpts firing patterns into something resembling a fragile skill.
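The closed loop described above (encode game state as stimulation, decode returned spikes into actions, then deliver reward or punishment) can be sketched in Python. Everything here is hypothetical: the split of the 59 electrodes into sensory and motor regions, the single "enemy position" input, the three actions, the toy hit rule and the random stand-in culture are illustrative assumptions, and none of it is the Cortical Cloud or biOS API. Feedback is modeled, again as an assumption, as a fixed stimulation pattern for reward and a random pattern for punishment.

```python
import random
from typing import Callable

N_CHANNELS = 59
# Hypothetical electrode layout (not Cortical Labs' actual mapping).
SENSORY = list(range(0, 8))         # receive the encoded game state
MOTOR = {                           # equal-sized regions read out as actions
    "turn_left": list(range(8, 24)),
    "turn_right": list(range(24, 40)),
    "fire": list(range(40, 56)),
}                                   # channels 56-58 are left unused

# A culture is modeled as: stimulated channels -> spike count per channel.
Culture = Callable[[list[int]], list[int]]


def encode(enemy_x: float) -> list[int]:
    """Place code: map the enemy's horizontal position in [0, 1] to one sensory electrode."""
    x = min(max(enemy_x, 0.0), 1.0)
    idx = min(int(x * len(SENSORY)), len(SENSORY) - 1)
    return [SENSORY[idx]]


def decode(counts: list[int]) -> str:
    """Choose the action whose motor region fired most; a tie means 'idle'."""
    scores = {action: sum(counts[c] for c in chans) for action, chans in MOTOR.items()}
    best = max(scores.values())
    winners = [a for a, s in scores.items() if s == best]
    return winners[0] if len(winners) == 1 else "idle"


def feedback(hit: bool, rng: random.Random) -> list[int]:
    """Reward: the same electrode pattern every time. Punishment: a random pattern."""
    if hit:
        return list(SENSORY)
    return rng.sample(range(N_CHANNELS), k=len(SENSORY))


def step(culture: Culture, enemy_x: float, rng: random.Random) -> tuple[str, bool]:
    """One loop iteration: stimulate, read spikes, act, then deliver feedback."""
    counts = culture(encode(enemy_x))
    action = decode(counts)
    hit = action == "fire" and 0.4 <= enemy_x <= 0.6  # toy rule: enemy roughly centred
    culture(feedback(hit, rng))  # the culture's response to feedback is ignored here
    return action, hit


if __name__ == "__main__":
    rng = random.Random(42)

    def fake_culture(stim: list[int]) -> list[int]:
        # Stand-in culture: random spike counts that ignore the stimulation entirely.
        return [rng.randint(0, 3) for _ in range(N_CHANNELS)]

    for _ in range(5):
        print(step(fake_culture, rng.random(), rng))
```

With a random stand-in culture the actions are noise; the point of the sketch is only where encoding, decoding and feedback sit in the loop, which is the part a real culture would have to learn through.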

Back in 2022, the DishBrain prototype learned to play Pong in around five minutes, a task that would take a standard deep reinforcement learning model about 90 minutes, suggesting that wetware can be startlingly data-efficient. The commercial CL1 shipped 115 units in 2025, priced at $35,000 each or $20,000 per unit in 30-unit racks, and a rack draws only 850 to 1,000 watts while running these experiments.
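The pricing, power and Pong figures above imply a few derived numbers. The Python below works them out, assuming the $20,000 figure is a per-unit price when bought as a 30-unit rack and that the quoted 850 to 1,000 W covers a full rack of 30 units; both readings are interpretations of the article, not vendor specifications.

```python
# Figures quoted in the article.
PRICE_SINGLE_USD = 35_000
PRICE_IN_RACK_USD = 20_000   # assumption: per unit, when bought as a 30-unit rack
UNITS_PER_RACK = 30
RACK_POWER_W = (850, 1_000)  # assumption: whole-rack draw covering all 30 units
PONG_MINUTES_DISHBRAIN = 5
PONG_MINUTES_DEEP_RL = 90


def rack_figures() -> dict:
    """Derive rack cost, discount, per-unit power and the Pong learning-time ratio."""
    return {
        "rack_cost_usd": PRICE_IN_RACK_USD * UNITS_PER_RACK,
        "same_units_bought_singly_usd": PRICE_SINGLE_USD * UNITS_PER_RACK,
        "rack_discount": 1 - PRICE_IN_RACK_USD / PRICE_SINGLE_USD,
        "watts_per_unit": tuple(w / UNITS_PER_RACK for w in RACK_POWER_W),
        "pong_speedup": PONG_MINUTES_DEEP_RL / PONG_MINUTES_DISHBRAIN,
    }


if __name__ == "__main__":
    f = rack_figures()
    print(f"30-unit rack: ${f['rack_cost_usd']:,} vs ${f['same_units_bought_singly_usd']:,} singly")
    print(f"rack discount: {f['rack_discount']:.0%}")
    lo, hi = f["watts_per_unit"]
    print(f"power per unit: {lo:.1f} to {hi:.1f} W")
    print(f"Pong learning speedup: {f['pong_speedup']:.0f}x")
```

Under those assumptions a full rack costs $600,000 rather than $1,050,000 (about 43% less), each unit draws roughly 28 to 33 W, and the Pong comparison works out to an 18-fold difference in learning time.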